GPU grant at Wigner Research Centre for Physics

Detection of the songs of collared flycatcher (Ficedula albicollis) with the help of deep neural networks

Over the last two decades, many bioacoustic projects have been conducted at the Department of Systematic Zoology and Ecology (Eötvös Loránd University). This work has mainly concerned the structure and function of birdsong, the echolocation of bats, and the development of passive acoustic methods for measuring biological diversity in nature.

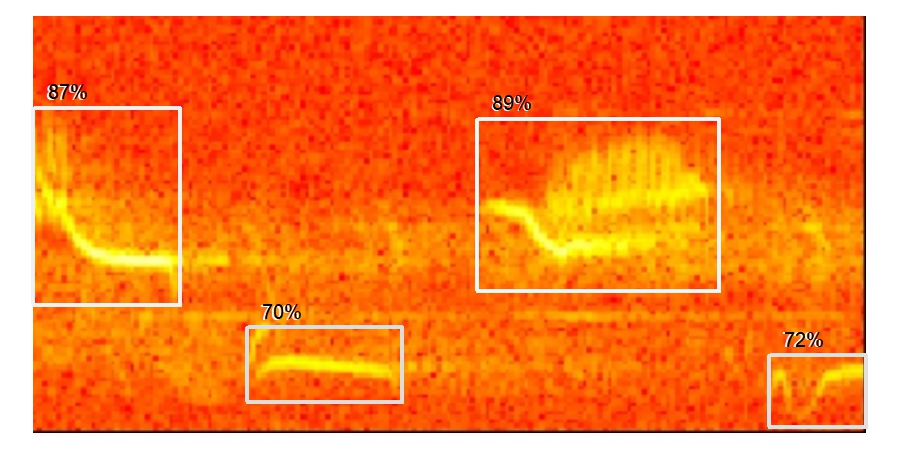

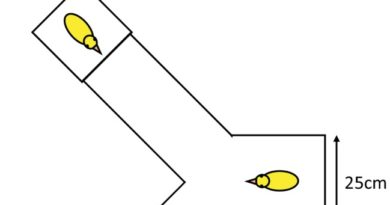

In our current research, we study the cultural evolution of the song of the collared flycatcher using a large amount of acoustic data recorded in the wild. To do this, we first have to find the songs in the recordings, then segment them into their smallest units (so-called syllables), and finally cluster the syllables, resulting in a universal syllable library. This process is very time-consuming, so we intend to automate it by training deep neural networks.

We have hundreds of hours of recordings, thousands of songs and more than 150,000 manually segmented and clustered syllables. We would like to train convolutional networks to detect songs and syllables in raw recordings based on their spectrographic representations. Training these networks effectively, including parameter tuning, requires high-capacity GPUs. We would then use the trained models to predict songs and syllables in new recordings. Both this prediction step and the clustering of syllables can be done on personal computers, so this application requests GPU resources only for training the deep neural networks. Developing these models would support every project that depends on large amounts of collared flycatcher song data, and so it would open up new scientific directions for answering questions about the evolution of animal acoustic communication.
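The spectrographic input described above can be illustrated with a minimal Python/NumPy sketch, assuming a mono recording already loaded as a float array. It computes a log-magnitude short-time Fourier transform and cuts it into fixed-width time patches, the kind of 2-D input a convolutional song or syllable detector could classify. The function names, window length, hop size and patch width are hypothetical choices for illustration, not the parameters or code used in this project.

```python
import numpy as np


def log_spectrogram(signal, sample_rate, win_len=512, hop=128):
    """Log-magnitude STFT (dB). Rows are frequency bins, columns are time frames.

    win_len and hop are hypothetical defaults chosen for illustration only.
    """
    window = np.hanning(win_len)
    n_frames = 1 + (len(signal) - win_len) // hop
    # Slice the signal into overlapping, windowed frames: shape (n_frames, win_len)
    frames = np.stack(
        [signal[i * hop : i * hop + win_len] * window for i in range(n_frames)]
    )
    # One-sided FFT per frame: shape (n_frames, win_len // 2 + 1)
    magnitude = np.abs(np.fft.rfft(frames, axis=1))
    spec_db = 20 * np.log10(magnitude + 1e-10)  # small offset avoids log(0)
    freqs = np.fft.rfftfreq(win_len, d=1.0 / sample_rate)
    times = (np.arange(n_frames) * hop + win_len / 2) / sample_rate
    return spec_db.T, freqs, times


def sliding_patches(spec, width, step):
    """Cut fixed-width patches along the time axis; each patch is one CNN input."""
    _, n_time = spec.shape
    starts = range(0, n_time - width + 1, step)
    return np.stack([spec[:, s : s + width] for s in starts])
```

For example, a one-second synthetic 5 kHz tone sampled at 44.1 kHz yields a spectrogram with 257 frequency bins and 341 time frames, whose strongest bin lies near 5 kHz; `sliding_patches(spec, 64, 32)` then produces nine 257 × 64 patches. In a real detector, each patch would be labelled (for example "song" or "no song") from the manual annotations and used to train the network.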

Thanks to the Wigner Lab for the opportunity!

And here are some nice preliminary results: